The early emergence of Artificial Intelligence is largely credited to computer scientist Alan Turing, who, in 1950, proposed what became known as the not-so-creatively named Turing Test. In the test, a human evaluator judges whether a machine can demonstrate intelligent behaviour to the point that it is indistinguishable from that of a human.

Put simply, if a computer could trick humans into thinking it was human, then it had intelligence.

The test was, at a basic level, an adaptation of a Victorian-style party game called The Imitation Game. This game involved Player A (a man), Player B (a woman), and Player C (an interrogator of either gender).

Player C can’t see Players A and B and can only communicate with them via written notes. By asking questions, Player C needs to figure out which of the two players is a man and which is a woman. In Turing’s version, however, one player is replaced by a machine, and it is up to the interrogator to reliably determine which player is human and which is not.

Although the term ‘artificial intelligence’ was not coined until 1956, Turing’s earliest-known mention of ‘computer intelligence’ dates to 1947, in a report where he asked whether it is possible for machinery to show intelligent behaviour.

But had Turing’s ‘imitation game’ already set in place the notion that ‘thinking machines’ were designed to deceive or replace us?

After all, we humans have a historic tendency to fear technological change. Remember the Luddites of the first industrial revolution: British weavers and textile workers who opposed the introduction of mechanised looms. They saw this technology as an insult to their craft and a threat to their livelihood, so they set about destroying it in the hope that their uprising would deter factory owners from installing it.

Through subsequent industrial revolutions we’ve seen recurrent pushback driven by fear: fear of mass unemployment, and fear that humans will be replaced by automation. But what we’ve seen in reality is that each significant transformation has been followed by mass economic growth.

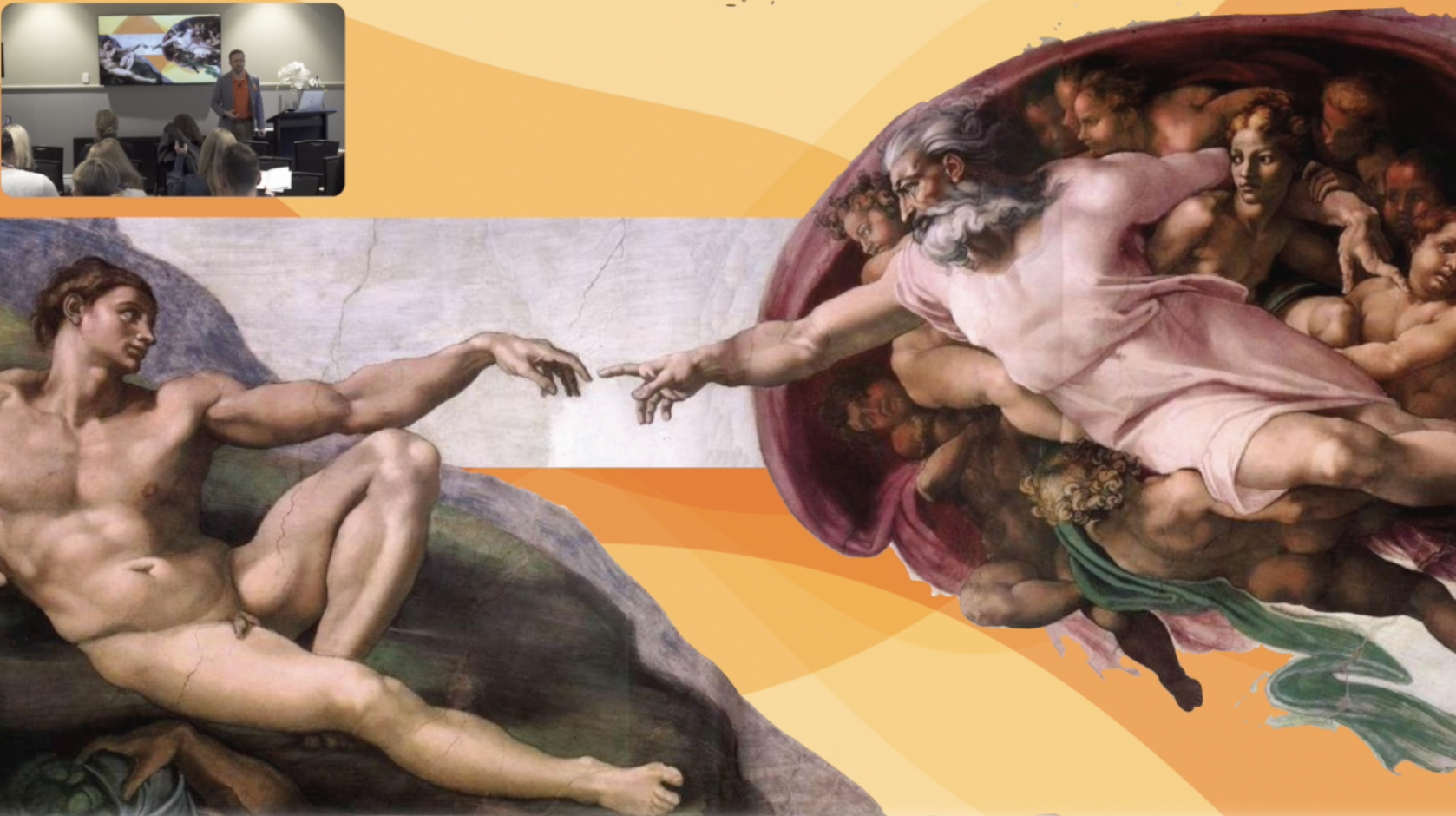

That’s because the outcome of each revolution, including the fourth that we’re now a part of (with the introduction of technologies that fuse the physical, digital and biological worlds), is that human capabilities are not so much replaced or mimicked as supported and complemented. They’re given space to reach their potential. They’re amplified and augmented.

While it might seem like semantics, reimagining artificial intelligence as augmented intelligence creates a small yet significant shift in perception. At Ambit, we define augmented intelligence as enabling humans to do a better job of the things they are there to do, rather than being consumed by repetitive, mundane and monotonous tasks. This view is backed by recent research showing that more than 80% of customer support agents’ time is spent addressing the same 20 customer enquiries.

Augmented intelligence is all about creating the space for your workforce to solve the more difficult and challenging problems using the strengths that set humans apart - things like emotional intelligence, intuition, empathy, and creative thinking.

Has the misconception of artificial, rather than augmented, intelligence been holding you back from embracing opportunities that would actually support your workforce? When you consider technology as a means of enabling your employees and supporting the potential they could achieve for your business, the possibilities offered through augmented intelligence become essential.

Could this change create a shift in what you see as possible for your business? Let’s start the conversation: www.ambit-ai.com/contact